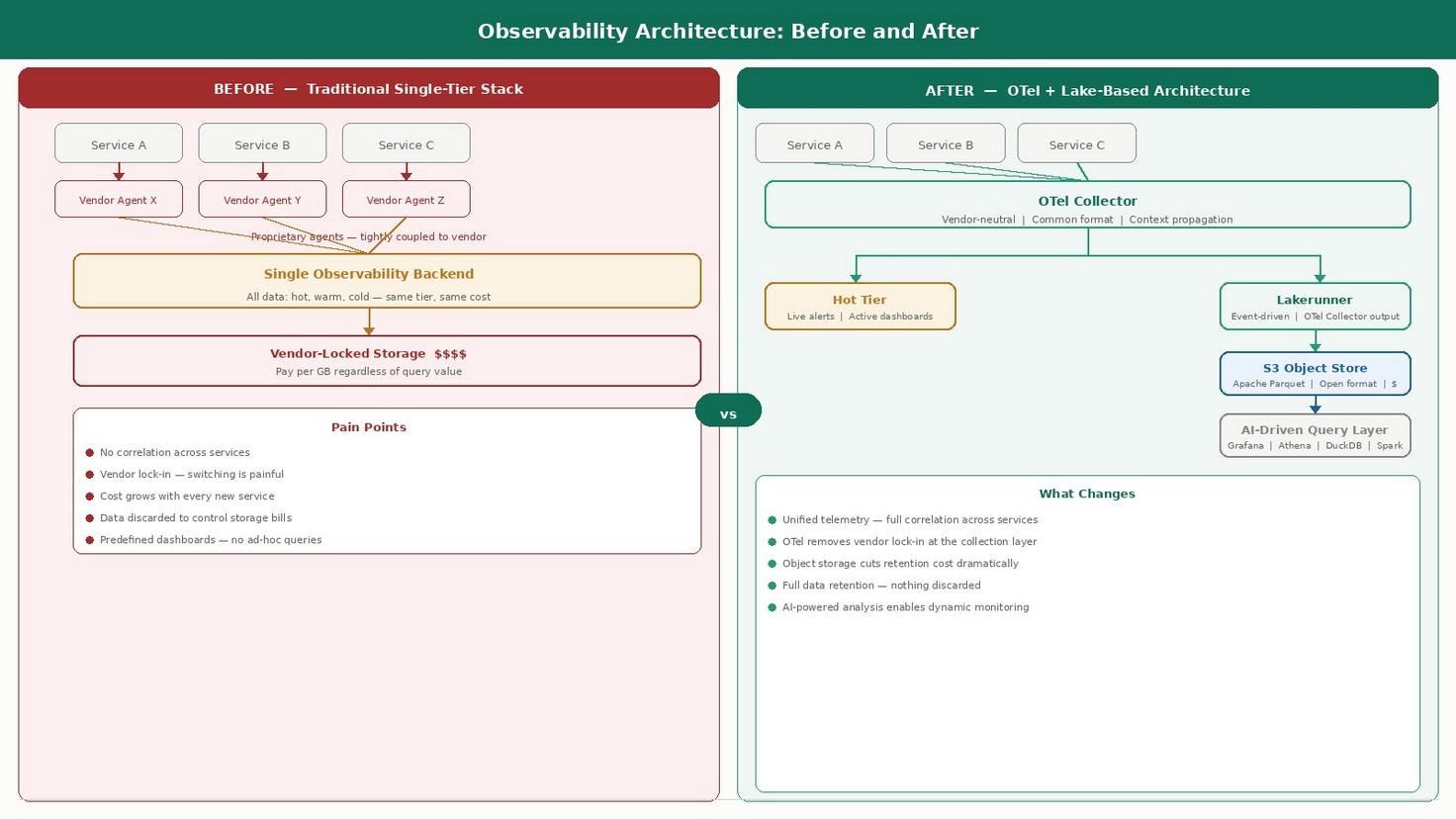

As enterprise environments grow more complex, the traditional approach to observability is showing its limits. The cost is unsustainable, the data is fragmented, and the insight does not match the investment. A new architectural pattern is emerging that changes all three.

In conversations with enterprise teams across Southeast Asia and India, we keep hearing a version of the same frustration. The observability stack has grown over the years: multiple tools, multiple agents, dashboards that someone built eighteen months ago and nobody has touched since. The data volume keeps climbing. The clarity does not. At some point, the question stops being "how do we collect more data?" and starts being "why are we paying this much for so little insight?" This blog is about what we think comes next.

THE CHALLENGE

Observability data is growing, but the insight is not keeping pace

Modern enterprises are generating more telemetry than at any point before. Every service, every container, every API call produces logs, metrics, and traces. On paper this should mean better visibility. In practice, most engineering teams will tell you the opposite is true.

The reason is fragmentation. In a microservices environment, each service tends to emit telemetry in its own format, routed to its own backend, with no shared context connecting it to the rest of the estate. When an incident occurs, engineers are not querying a unified system. They are manually correlating fragments across multiple tools, multiple formats, and multiple timelines. The data exists. The insight has to be assembled by hand, under pressure, in the middle of an outage.

This is not a minor inconvenience. It slows down incident response, burns engineering time on correlation work that should be automated, and means the investment in telemetry infrastructure is not translating into the operational clarity it should. The problem compounds with every new service added to the estate.

THE TRAP

Vendor lock-in on the collection layer is limiting more than just flexibility

Most enterprises did not choose vendor lock-in deliberately. They chose a collection agent that worked, a backend that integrated with it, and a visualisation layer that came bundled. Those were reasonable decisions at the time. Over years, they hardened into a dependency that is now very difficult and expensive to unwind.

The collection agent is embedded across hundreds of services. The data format is proprietary. Pipelines and alerting rules are built around one platform’s assumptions. Switching is theoretically possible, but in practice it never reaches the top of the priority list because the cost and effort are so high. So the dependency stays, absorbs annual price increases, and quietly accumulates technical debt in the background.

What makes this particularly costly is that it locks the cost structure, too. As data volumes grow, spend grows with them. There is no leverage to negotiate and no practical path to a different model. The flexibility problem and the cost problem are the same problem, rooted in the same architectural decision made years earlier.

THE COST REALITY

Observability storage is becoming a material cost problem for enterprise IT

Traditional observability pricing was designed for a different era of data volumes. At modest scale, paying per GB ingested and stored is manageable. At enterprise scale, with continuous deployments, high-cardinality telemetry, and retention requirements measured in months, it becomes a line item that finance teams start questioning.

The structural issue is that cost grows regardless of whether the data is useful. Debug logs from deprecated services, health check traces firing every few seconds, and duplicate telemetry from overlapping instrumentation are all stored at the same cost per byte as the signals that actually drive decisions. According to research from Observe Inc, 46% of organisations have discarded telemetry data based solely on cost. Separately, OneUptime’s analysis found observability now consumes 17% of total infrastructure budgets, and the Grafana 2025 Observability Survey found 37% of engineering leaders already consider those costs too high.

When nearly half of all organisations are discarding telemetry because they cannot afford to keep it, the observability infrastructure is working against the goal it was built to serve. Patching this with better dashboards or more aggressive sampling does not address the root cause.
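To make the flat per-byte pricing problem concrete, here is a rough sketch of the arithmetic. Every figure in it is illustrative and hypothetical: the source names, the daily volumes, the `useful` labels, and the price per GB are assumptions made up for the example, not measurements or any vendor's actual rates.

```python
from dataclasses import dataclass


@dataclass
class TelemetrySource:
    name: str
    gb_per_day: float
    useful: bool  # hypothetical label: does this data actually drive decisions?


def monthly_cost(sources, price_per_gb, days=30):
    """Flat per-GB pricing: every byte costs the same, useful or not.

    Returns (total spend, spend on sources labelled not useful).
    """
    total = sum(s.gb_per_day for s in sources) * days * price_per_gb
    low_value = sum(s.gb_per_day for s in sources if not s.useful) * days * price_per_gb
    return total, low_value


# Illustrative volumes only, chosen to keep the arithmetic easy to follow.
sources = [
    TelemetrySource("checkout-service traces", 40.0, True),
    TelemetrySource("debug logs from a deprecated service", 25.0, False),
    TelemetrySource("health check traces", 20.0, False),
    TelemetrySource("duplicate instrumentation", 15.0, False),
]

total, low_value = monthly_cost(sources, price_per_gb=0.5)
print(f"monthly spend: {total:.2f}")
print(f"share spent on low-value telemetry: {low_value / total:.0%}")
```

The point is not the specific numbers. Under a flat per-GB model, the bill depends only on volume, so the low-value share grows at the same rate as everything else.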

THE ARCHITECTURAL SHIFT

OpenTelemetry breaks the collection lock-in and creates a unified data layer

OpenTelemetry was built to address this set of problems. It is a vendor-neutral, open-source framework for collecting logs, metrics, and traces, hosted by the Cloud Native Computing Foundation and supported across the vast majority of observability platforms, cloud providers, and language runtimes in production use today.

The shift OTel enables is architectural. Instead of the collection layer being tied to a vendor, it becomes a standard. Every service emits telemetry in a common format. The collector routes it wherever it needs to go. If the backend changes next year, the instrumentation does not. The lock-in dissolves because the coupling at the collection layer is removed.

More importantly, OTel addresses the fragmentation problem directly. When every service emits telemetry in the same format with the same context propagation, correlation across services becomes a query rather than a manual investigation. A single trace ID connects a user request from the frontend through every microservice it touched, through every log line it generated, to the database call that caused the slowdown. That connected view is what unified observability actually means in practice.
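As a minimal sketch of what "correlation becomes a query" looks like, the snippet below filters spans and log records by a shared trace ID. The record shapes, the short trace IDs, and the service names are hypothetical simplifications for illustration. Real OpenTelemetry data carries 32-character hex trace IDs and many more fields, and a real backend would run this as a query rather than a list comprehension.

```python
# Hypothetical records, loosely shaped like exported spans and log records.
spans = [
    {"trace_id": "a1", "service": "frontend", "name": "GET /checkout", "duration_ms": 1240},
    {"trace_id": "a1", "service": "orders", "name": "create_order", "duration_ms": 1180},
    {"trace_id": "a1", "service": "orders-db", "name": "SELECT orders", "duration_ms": 1100},
    {"trace_id": "b2", "service": "frontend", "name": "GET /home", "duration_ms": 35},
]
logs = [
    {"trace_id": "a1", "service": "orders", "message": "slow query warning"},
    {"trace_id": "b2", "service": "frontend", "message": "rendered home"},
]


def correlate(trace_id, spans, logs):
    """Return every span and log record that shares the given trace ID."""
    return {
        "spans": [s for s in spans if s["trace_id"] == trace_id],
        "logs": [r for r in logs if r["trace_id"] == trace_id],
    }


result = correlate("a1", spans, logs)
services_touched = [s["service"] for s in result["spans"]]
print(services_touched)
print([r["message"] for r in result["logs"]])
```

Because the frontend, the orders service, and the database call all carry the same trace ID, one lookup returns the whole request path plus the log line that explains it. Without shared context, each of those would be a separate search in a separate tool.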

THE STORAGE AND INTELLIGENCE ANSWER

Cardinal and object storage: solving cost, retention, and the intelligence gap together

OpenTelemetry solves collection and correlation. It does not solve storage cost or tell you what to do with the data once you have it. For storage, the direction the industry is moving is clear: telemetry should land in S3-compatible object storage, at commodity pricing, in an open format your organisation owns outright — without being metered, sampled, or held in a vendor’s black box.

Cardinal, built by ex-Netflix engineers and backed by CRV, is one of the clearest examples of this architectural pattern done right. The flow runs entirely inside your own VPC. Standard OTel collectors ship logs, metrics, and traces directly to your object storage. Cardinal Lakerunner, an open-source observability data lake, then indexes that data in place and serves queries at sub-second latency, with native Grafana integration for dashboards and Kubernetes-native autoscaling for production workloads. There is no separate expensive backend to maintain alongside it. Lakerunner is a production-grade observability stack at object storage economics, without discarding a single event.

What changes the nature of incident response entirely is Cardinal’s Agent Runtime layered on top. Rather than engineers clicking through dashboards to reconstruct what happened, agents handle the investigation. Composite troubleshooting skills — an Outlier Detector that decomposes metric spikes across every tag combination, a Correlation Finder that aligns deploys against metric timelines, an Error Summarizer that clusters raw errors into ranked groups — fire hundreds of queries against the data lake in seconds and return evidence-backed answers through Slack and other integrations your team already uses. Agents using Claude, ChatGPT, Cursor, or internal models connect through the Cardinal Agent Runtime without query rate limits or per-seat costs constraining how deep the investigation goes.

That shift — from engineers navigating dashboards under pressure, to agents proactively detecting regressions and returning ranked causes before they become customer-visible problems — is the real value of the lake-based observability pattern.
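To give a feel for the kind of work an outlier-detection skill automates, here is a small sketch of decomposing a metric increase across tag combinations. This is not Cardinal's implementation, which we have not seen. The function name `decompose_spike`, the data shape, and the tag values are all assumptions for illustration, and a production detector would need normalisation and statistical tests that this omits.

```python
from collections import defaultdict
from itertools import combinations


def decompose_spike(baseline, spike, tag_keys):
    """Rank tag combinations by how much of the metric increase they account for.

    baseline and spike are lists of (tags_dict, value) datapoints from the
    window before the spike and the window during it.
    """
    def totals(points, keys):
        out = defaultdict(float)
        for tags, value in points:
            out[tuple((k, tags[k]) for k in keys)] += value
        return out

    ranked = []
    # Try every combination of tag keys: single tags first, then pairs, and so on.
    for size in range(1, len(tag_keys) + 1):
        for keys in combinations(tag_keys, size):
            before = totals(baseline, keys)
            after = totals(spike, keys)
            for group in set(before) | set(after):
                ranked.append((after[group] - before[group], group))
    ranked.sort(key=lambda item: item[0], reverse=True)
    return ranked


# Hypothetical error counts per (region, version) before and during a spike.
baseline = [
    ({"region": "ap-south-1", "version": "v1"}, 10),
    ({"region": "ap-southeast-1", "version": "v1"}, 12),
]
spike = baseline + [
    ({"region": "ap-south-1", "version": "v2"}, 50),
    ({"region": "ap-southeast-1", "version": "v2"}, 45),
]

ranked = decompose_spike(baseline, spike, ["region", "version"])
for delta, group in ranked[:3]:
    print(delta, dict(group))
```

In this toy data the top-ranked group is `version=v2`, which points at a deploy rather than a region. With two tags this is trivial to do by hand. With dozens of tags the number of combinations explodes, which is why it makes sense to hand the search to an agent that can fire the queries in bulk.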

THE PAYOFF

This is what finally makes dynamic monitoring possible at enterprise scale

The reason predefined dashboards have always felt inadequate is not that they were built wrong. It is that they were a workaround for a broken infrastructure. When data was fragmented across systems, vendor-locked at the collection layer, and too expensive to retain in full, teams built fixed views in advance because ad-hoc querying at scale was not feasible. The dashboard was compensation for an architecture that could not support real questions.

Once the infrastructure is fixed, the workaround becomes unnecessary. When telemetry is collected through a unified vendor-neutral layer, stored in an open format on object storage, and queryable by AI-capable tooling, the nature of monitoring changes. Teams are no longer constrained by what someone thought to visualise last quarter. They can ask any question against the full telemetry history, without having pre-built the view, and get an answer in seconds rather than hours of manual correlation.

This is what dynamic monitoring actually means. Not a better dashboard product. A different relationship between engineering teams and their operational data. The data volume and complexity of a modern microservices estate, which used to make monitoring harder, becomes the foundation for a quality of operational intelligence that was not achievable before.