As pressure mounts to fast-track AI across the enterprise, the real opportunity is not in rewriting mission-critical systems but in modernizing the layers around them: access, data availability and control.

Over the past several months, one pattern has become impossible to miss in conversations with CIOs.

The pressure to accelerate AIfication is now real.

Boards want visible progress. Business functions want faster automation. Technology teams are being pushed to show how AI will reshape operations, customer engagement and decision-making. The demand feels urgent, broad and at times relentless.

In many ways, it resembles an earlier enterprise movement: Appification.

There was a time when enterprises were under pressure to modernize their applications so they behaved more like consumer platforms—intuitive, responsive, mobile-first and socially inspired. Today, that same pressure has evolved. The expectation is no longer just to modernize user experience. It is to make systems AI-aware, AI-assisted and AI-accelerated.

That sounds compelling in the boardroom. It becomes much harder inside the data center.

Because once the conversation moves from ambition to architecture, a practical reality appears.

There is only so much you can safely change inside a core enterprise application.

In large banks, telecom environments and other mission-critical enterprises, the core application is often deeply entangled with business logic, compliance requirements, operational dependencies and years of accumulated customization. It runs billing, servicing, customer onboarding, transactions, reconciliation or network operations. Touching it is never a casual decision.

At this stage, in many organizations, it is neither advisable nor even feasible to make deep changes to that core layer merely to satisfy the AI agenda.

Sometimes the risk is too high. Sometimes the skills are not available. Sometimes the institutional understanding of the application has faded over time. And often, all three are true at once.

That is why the most practical AI strategy for enterprises is not to start by changing the core application.

It is to start by modernizing the architecture around it.

The Wrong Question

Many enterprise AI programs begin with the wrong question:

How do we embed AI into the application?

That often leads teams toward expensive, risky and slow-moving transformation paths. It assumes the application itself must become the primary vehicle of AI change.

A better question is this:

How do we make the application AI-accessible, operationally observable and data-ready without destabilizing it?

That changes everything.

It shifts AI from being a rewrite agenda to being an architecture agenda.

And once that shift happens, a much more realistic path appears.

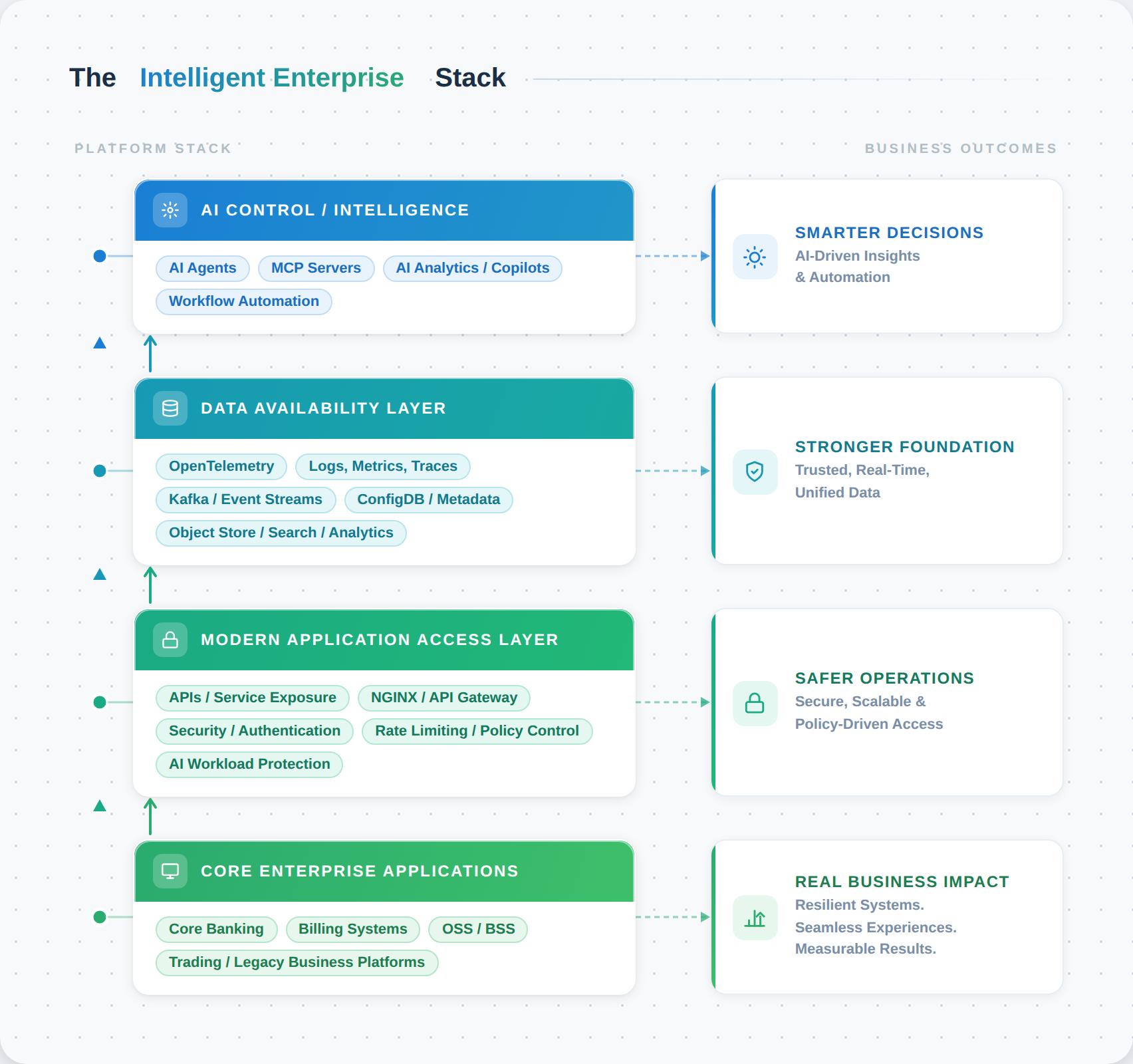

The Three-Layer Approach to Enterprise AIfication

For many CIOs, the safer and smarter route is to modernize three layers around the core system.

1. Modernize the access layer

Before AI can assist, automate or analyze, it needs governed access to business capabilities.

In many enterprises, application access is still fragmented across legacy interfaces, point integrations, brittle connectors or tightly coupled service paths. This makes AI adoption difficult not because the models are weak, but because the enterprise lacks a clean, policy-driven interaction layer.

Modernizing this layer means introducing:

- modern APIs and service exposure

- traffic control and routing

- identity-aware access

- policy enforcement

- workload isolation for AI consumers

- protection for internal services and models

This is where technologies like NGINX become strategically important. Not merely as reverse proxies or load balancers, but as control points for securing and governing how modern applications, services, agents and AI workloads interact with enterprise systems.

In other words, before AI becomes intelligent, access must become modern.
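To make the idea concrete, here is a minimal sketch of identity-aware, policy-driven access with a separate path for AI consumers. It is an illustration only: the role names, capability names and pool names are hypothetical, and in a real deployment this logic would typically live in a gateway or proxy layer rather than in application code.

```python
from dataclasses import dataclass

# Hypothetical policy table: which consumer roles may call which capability.
# Role and capability names are illustrative, not taken from a real system.
POLICIES = {
    "ai-agent": {"accounts.read_balance", "tickets.search"},
    "ops-copilot": {"tickets.search", "tickets.create"},
}


@dataclass(frozen=True)
class Request:
    consumer_id: str  # identity of the caller, e.g. from an already verified token
    role: str         # role attached to that identity
    capability: str   # business capability being requested


def authorize(request: Request) -> bool:
    """Allow the call only if the caller's role is explicitly granted the capability."""
    allowed = POLICIES.get(request.role, set())
    return request.capability in allowed


def route(request: Request) -> str:
    """Deny by default; send permitted AI consumers to an isolated upstream pool."""
    if not authorize(request):
        return "denied"
    # Workload isolation: AI consumers never share a pool with other traffic.
    return "ai-pool" if request.role == "ai-agent" else "default-pool"
```

The design choice worth noting is deny-by-default: an unknown role or an unlisted capability is rejected without the core application ever seeing the request.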

2. Modernize the data availability layer

AI does not create value in a vacuum. It creates value when the right data becomes discoverable, usable and timely.

The challenge in most enterprises is not just a lack of data. It is a lack of data that is available in an operationally useful form.

That means modernizing the pathways through which data becomes available from systems, infrastructure and operational environments. It often includes:

- telemetry from applications and platforms through OpenTelemetry

- logs, metrics and traces flowing into scalable observability architectures

- event streams through technologies like Kafka

- metadata and environment context from configuration databases

- historical and analytical storage in object stores

- search and analytics engines that make data explorable

This layer is critical because it creates the operational fabric on which AI can work. Without it, AI remains disconnected from reality. With it, AI can analyze behavior, detect anomalies, understand topology, enrich context and support human decision-making.
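One way to picture "enriching context" is to join a raw telemetry event with metadata from a configuration database before any AI component sees it. The sketch below assumes a hypothetical in-memory `CMDB` dictionary and made-up service names; a real implementation would read from the organization's own configuration store.

```python
from typing import Any

# Hypothetical configuration-database snapshot keyed by service name.
CMDB = {
    "billing-api": {
        "owner": "payments-team",
        "tier": "critical",
        "depends_on": ["ledger-db"],
    },
    "ledger-db": {"owner": "data-platform", "tier": "critical", "depends_on": []},
}


def enrich(event: dict[str, Any], cmdb: dict[str, dict[str, Any]]) -> dict[str, Any]:
    """Return a copy of the event with ownership and topology context attached."""
    context = cmdb.get(event.get("service", ""), {})
    enriched = dict(event)  # leave the original event untouched
    enriched["owner"] = context.get("owner", "unknown")
    enriched["tier"] = context.get("tier", "unknown")
    enriched["depends_on"] = list(context.get("depends_on", []))
    return enriched


raw_event = {"service": "billing-api", "metric": "latency_ms", "value": 840}
enriched_event = enrich(raw_event, CMDB)
```

With ownership and dependencies attached, a downstream model or analyst can reason about which team and which upstream systems are involved, not just about a bare metric.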

3. Introduce an AI control and intelligence layer

Once access is modernized and data is made available, the organization can introduce AI in a much more controlled and meaningful way.

This is where we increasingly see the role of:

- enterprise-hosted Model Context Protocol (MCP) servers

- AI agents

- AI-driven analytics

- operational copilots

- automation workflows

- policy-bound orchestration services

This control layer should not be confused with putting AI everywhere. In fact, its strength comes from doing the opposite. It creates a governed intelligence layer that sits above the operational environment, consumes trusted signals and applies AI where it adds value.

This is a much better enterprise pattern than blindly embedding AI inside every application component.
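A governed intelligence layer can be sketched as a registry that exposes only approved tools to named agents and records every attempt. This is a conceptual illustration, not the MCP SDK or any vendor API: the `GovernedToolRegistry` class, the agent names and the `lookup_service_owner` tool are all hypothetical.

```python
from typing import Callable


class GovernedToolRegistry:
    """Hypothetical control layer: agents may call only tools granted to them."""

    def __init__(self) -> None:
        self._tools: dict[str, Callable[..., object]] = {}
        self._grants: dict[str, set[str]] = {}
        self.audit_log: list[tuple[str, str, str]] = []

    def register(self, name: str, func: Callable[..., object]) -> None:
        """Expose a function as a named tool."""
        self._tools[name] = func

    def grant(self, agent: str, tool: str) -> None:
        """Explicitly allow one agent to use one tool."""
        self._grants.setdefault(agent, set()).add(tool)

    def call(self, agent: str, tool: str, **kwargs: object) -> object:
        """Run the tool if permitted; log the attempt either way."""
        permitted = tool in self._tools and tool in self._grants.get(agent, set())
        if not permitted:
            self.audit_log.append((agent, tool, "denied"))
            raise PermissionError(f"{agent} may not call {tool}")
        self.audit_log.append((agent, tool, "allowed"))
        return self._tools[tool](**kwargs)


def lookup_service_owner(service: str) -> str:
    """Illustrative read-only tool backed by static metadata."""
    return {"billing-api": "payments-team"}.get(service, "unknown")


registry = GovernedToolRegistry()
registry.register("lookup_service_owner", lookup_service_owner)
registry.grant("ops-copilot", "lookup_service_owner")
owner = registry.call("ops-copilot", "lookup_service_owner", service="billing-api")
```

The point of the pattern is that the agent never touches the core system directly. It works through a small set of audited, read-mostly capabilities that the enterprise controls.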

Architecture: AI-Enabling the Enterprise Without Rewriting the Core

What This Looks Like in Practice

This is not theoretical.

In one banking, financial services and insurance (BFSI) engagement, the challenge was not whether AI could be useful. It was how to introduce AI-enablement without destabilizing a business-critical environment.

The answer was not to change the core application itself.

The program focused instead on:

- modernizing the access mechanism to the application

- creating a more structured and modern operational access layer

- building a configuration intelligence database

- introducing an organizationally hosted MCP server architecture

- enabling AI to interact through governed metadata, system context and controlled interfaces

The effect was significant. The enterprise created a practical path to AI without taking unnecessary risk inside the transaction-bearing application core.

In another engagement with a telecom customer, the immediate need was not application transformation but observability modernization.

Here the architecture focused on:

- OpenTelemetry-based telemetry collection

- object-store-backed scalable data retention

- modern analytics on top of operational data

- AI-based analysis to improve visibility, correlation and insight generation

This created a more future-ready observability stack—one that was not only more scalable, but also far more suitable for AI-assisted operations.
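As a rough illustration of what analysis on top of retained operational data can look like, the sketch below flags outliers in a metric series using a plain z-score. This is a simple statistical stand-in chosen for clarity, not the AI-based analysis used in the engagement, and the function name, threshold and sample values are assumptions.

```python
from statistics import mean, stdev


def find_anomalies(values: list[float], threshold: float = 2.5) -> list[int]:
    """Return indexes of points more than `threshold` sample standard deviations from the mean."""
    if len(values) < 2:
        return []  # not enough data to estimate spread
    mu = mean(values)
    sigma = stdev(values)
    if sigma == 0:
        return []  # a perfectly flat series has no outliers
    return [i for i, v in enumerate(values) if abs(v - mu) / sigma > threshold]


# Hypothetical latency samples in milliseconds, with one obvious spike at the end.
latency_ms = [100, 102, 98, 101, 99, 100, 103, 97, 100, 400]
suspect_indexes = find_anomalies(latency_ms)
```

On short windows a single spike inflates the standard deviation, so the threshold has to be chosen with the window size in mind. That is one reason scalable retention matters: longer history gives steadier baselines.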

In parallel, security for AI-facing workloads and modern traffic patterns was reinforced through architecture choices involving NGINX in the core design.

These examples point to a larger lesson.

AI transformation often succeeds faster when it is built around access, observability, metadata and control planes—rather than around direct changes to the core application itself.

Why This Matters Especially for CIOs

For CIOs, this approach does more than reduce technical risk. It solves a political and operational problem as well.

Every CIO today is balancing four tensions at once:

- pressure to move faster on AI

- pressure to avoid operational instability

- pressure to work within real skill constraints

- pressure to show measurable progress without overcommitting capital

A core rewrite rarely satisfies all four.

An architecture-led enablement strategy often does.

It allows the enterprise to move incrementally. It supports phased adoption. It creates reusable digital infrastructure. It reduces dependency on one application team or one vendor. Most importantly, it creates options.

And in enterprise transformation, options matter.

Why Open Source Has a Natural Advantage Here

This is also where open source becomes strategically powerful.

The architecture required for practical enterprise AIfication is not built around a single monolithic platform. It is built around interoperable components across access, telemetry, streaming, analytics and control.

That is exactly where open-source ecosystems are strong.

Whether it is:

- OpenTelemetry for instrumentation and observability

- Kafka for data movement and event streaming

- object storage for scalable retention

- search and analytics platforms for exploration

- NGINX for access control and AI workload protection

- MCP-oriented architectures for model interaction and control

the value lies in flexibility, openness and composability.

For CIOs wary of being trapped in high-cost, one-way AI platforms, that matters enormously.

Open source does not merely reduce license cost. In this context, it preserves architectural freedom.

The Shift in Thinking CIOs Need to Make

If there is one mindset shift that matters now, it is this:

AI readiness should be treated as an architectural capability, not an application feature.

That distinction is crucial.

- Applications may remain stable.

- Interfaces can become modern.

- Data can become available.

- Observability can become intelligent.

- Control layers can become AI-enabled.

And all of this can happen without reckless intervention into systems that already carry the weight of the business.

That is not a compromise.

It is often the most mature path forward.

The Road Ahead

The enterprises that succeed in AI will not necessarily be the ones that rebuild the most.

They will be the ones that understand where not to intervene, where to modernize around the core and how to create intelligent layers of access, data and control on top of what already works.

That is where practical AIfication begins.

For technology leaders, the challenge is no longer whether AI matters. It does. The challenge is how to adopt it in a way that respects the realities of enterprise architecture. In my experience, the most durable path is not to force AI into fragile cores, but to modernize the layers that surround them—access, observability, metadata and control. That is where enterprise-scale AI becomes practical.