Modern Kubernetes networking demands more than what the original Ingress resource was designed to offer. NGINX Gateway Fabric takes a fundamentally different approach — one built around a clean separation of concerns, declarative resource ownership, and a battle-tested NGINX data plane. Here is a thorough look at how it all fits together.

Why Architecture Matters for a Gateway

For years, the standard way to route traffic into a Kubernetes cluster was through the Ingress resource. It worked, but it came with a hidden cost: the Ingress spec was intentionally minimal, meaning almost every interesting feature — rate limiting, header rewriting, canary deployments, authentication — ended up being implemented as vendor-specific annotations. Your configuration was no longer portable. Your platform team and application developers shared the same resource. And scaling the controller meant scaling everything together.

NGINX Gateway Fabric was built to address these structural problems and, in its 2.x architecture, introduced a clean separation of control and data planes. It is NGINX’s official implementation of the Kubernetes Gateway API — a newer, role-aware, and far more expressive networking standard. But to understand why it solves these problems so cleanly, you have to understand its internal architecture: specifically, how the control plane and data plane are separated, how they communicate, and what that separation makes possible.

The Core Principle: Separating Control from Data

Before NGINX Gateway Fabric 2.0, the control plane and the data plane ran together inside a single pod, as they do in many other Kubernetes gateway implementations and earlier NGINX controllers. This was simple to deploy, but it created tight coupling. If you wanted to scale your proxy to handle more traffic, you were forced to scale the configuration manager alongside it, even if it did not need extra capacity. Worse, a bug or crash in one component could affect the other.

NGINX Gateway Fabric makes a deliberate architectural choice: the control plane and the data plane are separate Kubernetes Deployments with their own independent lifecycles.

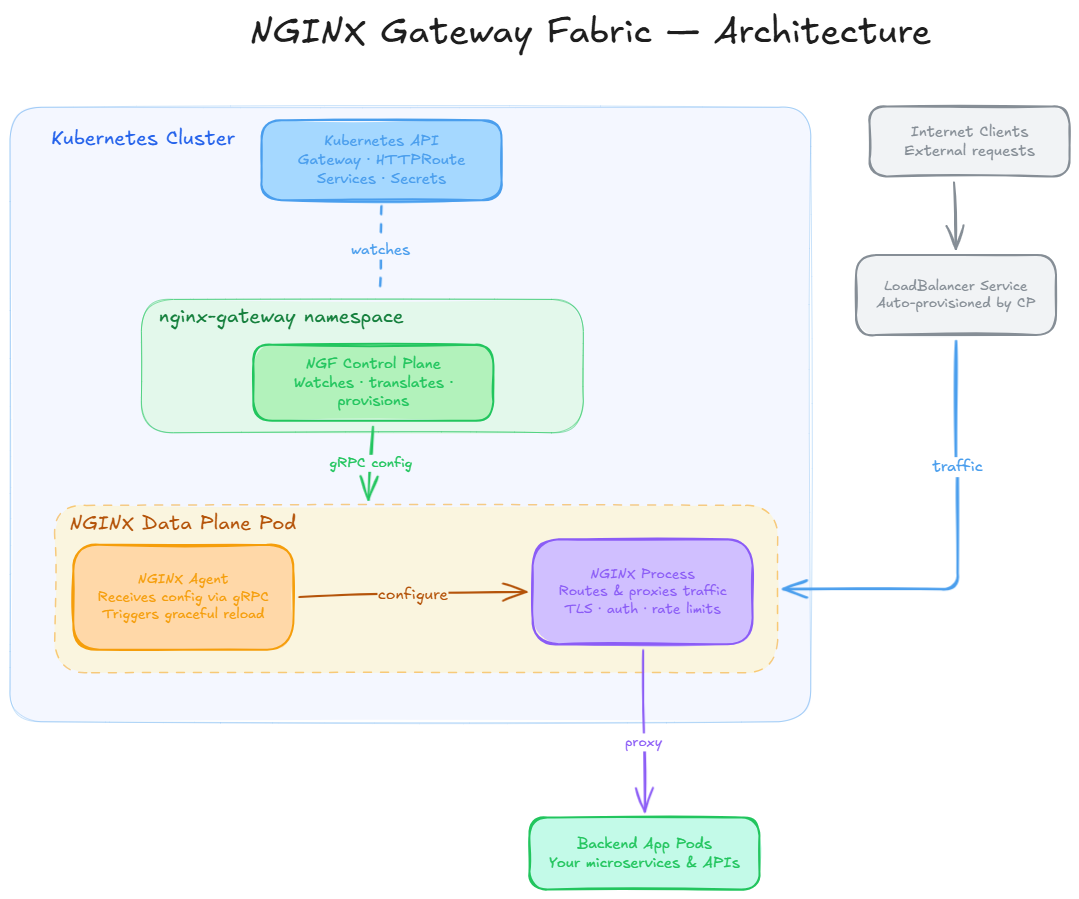

- The control plane is responsible for watching Kubernetes API resources, translating them into NGINX configuration, and delivering that configuration to the data plane.

- The data plane is responsible for actually handling network traffic — receiving requests, applying routing rules, enforcing policies, and forwarding to backend services.

Neither plane is aware of the other’s internal workings. They communicate through a well-defined interface. This is not just a performance optimisation — it is the foundation that makes the entire NGINX Gateway Fabric architecture secure, scalable, and resilient.

Figure 1: NGINX Gateway Fabric runtime architecture. The control plane and data plane are separate deployments. The NGF Control Plane watches Kubernetes API resources and delivers configuration to the NGINX Agent inside each data plane pod via a secure gRPC connection. Internet traffic enters through a LoadBalancer Service provisioned automatically by the control plane, passes through the NGINX Process, and is proxied to backend app pods.

The Control Plane: The Brain of the Operation

The NGF Control Plane is a standard Kubernetes controller, built on top of the controller-runtime library — the same foundation used by the Kubernetes Operator Framework. It runs as a Deployment in its own dedicated namespace (typically nginx-gateway) and has exactly one responsibility: maintaining the desired state of your NGINX data planes by continuously reconciling Kubernetes API resources.

What it watches

The control plane establishes watch connections to the Kubernetes API server and listens for changes to a specific set of resources:

- Gateway API resources such as GatewayClass, Gateway, and supported route types including HTTPRoute, GRPCRoute, TCPRoute, TLSRoute, and UDPRoute

- Core Kubernetes resources: Services, EndpointSlices, and Secrets (for TLS certificates), along with other related objects that affect routing and backend resolution

- NGF custom resources and policies: Resources that shape data-plane behaviour, such as NginxProxy for data-plane tuning and the various policy attachments supported in the installed version

Every time any of these resources changes — whether an application developer adds a new HTTPRoute or an operator modifies a TLS secret — the control plane is notified, re-computes the NGINX configuration, and pushes the update to the affected data plane pods. This reconciliation loop is the heartbeat of the system.

What it produces

The translation from Gateway API resources to NGINX configuration happens entirely inside the control plane. A routing rule expressed in the language of HTTPRoute matchers and backend references becomes an nginx.conf block with upstream definitions, location blocks, and proxy directives. Application developers and cluster operators never need to write a single line of NGINX configuration directly.
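To make the translation concrete, here is a minimal sketch of the kind of input the control plane consumes. The route name, hostname, namespace, Gateway name, and Service name are all hypothetical. The comments describe only the general shape of the resulting NGINX configuration; the exact directives that NGINX Gateway Fabric generates are internal and may differ between versions.

```yaml
apiVersion: gateway.networking.k8s.io/v1
kind: HTTPRoute
metadata:
  name: orders-route            # hypothetical route name
  namespace: team-alpha         # hypothetical namespace
spec:
  parentRefs:
  - name: production-gateway    # hypothetical Gateway this route attaches to
  hostnames:
  - orders.example.com          # roughly corresponds to a server block for this host
  rules:
  - matches:
    - path:
        type: PathPrefix
        value: /orders          # roughly corresponds to a location block
    backendRefs:
    - name: orders-svc          # hypothetical Service; its endpoints back an upstream
      port: 8080
```

The developer who writes this route only describes intent (host, path, backend). Everything NGINX-specific is derived by the control plane.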

Secure communication with the data plane

The control plane does not mount shared volumes or rely on Unix signals to push configuration to NGINX — approaches that were common in older architectures but that required both planes to be co-located in the same pod. Instead, it uses a gRPC connection to the NGINX Agent running inside each data plane pod. By default, this connection is encrypted with self-signed TLS certificates generated at installation time. Integration with cert-manager is supported for environments that require externally managed certificates.

The Data Plane: NGINX Doing What It Does Best

The data plane is where all network traffic flows. It runs as a separate Kubernetes Deployment (or DaemonSet, depending on your deployment preference) and contains exactly one type of pod. Each data plane pod has two tightly integrated processes: the NGINX Agent and the NGINX Process.

NGINX Agent

The NGINX Agent is a lightweight process responsible for the management layer inside each data plane pod. It maintains a persistent gRPC connection to the NGF Control Plane and listens for configuration updates. When the control plane sends a new NGINX configuration, the agent is responsible for writing it to disk and triggering a graceful reload of NGINX — without requiring a pod restart or any shared volume between the control and data planes.

For users running NGINX Plus, the NGINX Agent additionally interfaces with the NGINX Plus API. This enables dynamic upstream updates — when backend pods scale up or down, new endpoints can be injected into NGINX’s active upstream pool without any reload at all. This is a significant advantage in high-throughput environments where even graceful reloads introduce micro-latency.

NGINX Process

The NGINX Process is the traffic engine — the same high-performance, battle-hardened NGINX that powers a significant portion of the world’s web infrastructure. It handles TLS termination, HTTP/2 and gRPC proxying, load balancing across backend pods, rate limiting, header manipulation, access logging, and every other traffic management function. The policies defined in Gateway API resources and NGF policy extensions are ultimately expressed as NGINX directives inside this process.

The separation between the Agent and the Process is intentional. Traffic handling is decoupled from configuration management. If the control plane goes down or becomes temporarily unavailable, the NGINX Process continues to serve traffic using the last known good configuration. Active connections are not dropped. This is a critical property for production workloads.

Dynamic Provisioning: Gateways on Demand

One of the most operationally elegant aspects of NGINX Gateway Fabric is how it creates data plane instances. You do not pre-provision NGINX pods. You do not configure them with IP addresses upfront. You declare a Gateway resource, and the control plane takes care of the rest.

When a cluster operator creates a Gateway object in Kubernetes, the NGF Control Plane:

- Validates the Gateway against the GatewayClass it references (the nginx class by default)

- Provisions a new NGINX Deployment in the same namespace as the Gateway

- Creates a LoadBalancer Service (or NodePort / ClusterIP, depending on configuration) to expose the NGINX pods externally

- Begins watching for HTTPRoute, GRPCRoute, and other route resources that reference this Gateway

- Continuously reconciles the NGINX configuration as routes and policies are added or modified
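The GatewayClass referenced in the first step is itself a small cluster-scoped resource, and it is normally created for you at installation time. A minimal sketch of what it looks like, assuming the default class name and the controller name used by a standard NGINX Gateway Fabric installation (verify both against your installed manifests, since they can be customised):

```yaml
apiVersion: gateway.networking.k8s.io/v1
kind: GatewayClass
metadata:
  name: nginx                   # the class name that Gateways reference
spec:
  # Assumed default controller name; confirm with
  # `kubectl get gatewayclass nginx -o yaml` in your cluster.
  controllerName: gateway.nginx.org/nginx-gateway-controller
```

Any Gateway whose gatewayClassName matches this class is picked up by the control plane and goes through the steps listed above.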

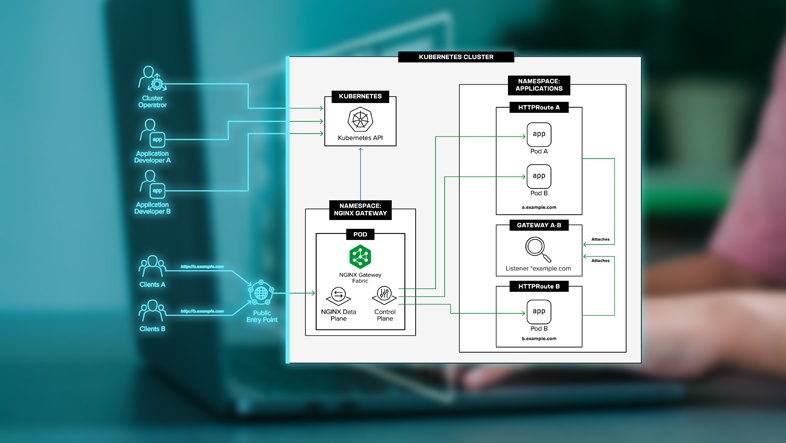

This 1:1 relationship between a Gateway resource and a dedicated NGINX Deployment is what makes multi-tenant and multi-team deployments clean and safe. Each Gateway has its own NGINX pods, its own Service (and therefore its own external IP when exposed through a LoadBalancer), and its own generated configuration.

```yaml
apiVersion: gateway.networking.k8s.io/v1
kind: Gateway
metadata:
  name: production-gateway
  namespace: team-alpha
spec:
  gatewayClassName: nginx
  listeners:
  - name: https
    port: 443
    protocol: HTTPS
    tls:
      mode: Terminate
      certificateRefs:
      - name: team-alpha-tls-cert
```

With this single declaration, NGINX Gateway Fabric will provision a fully configured NGINX deployment with TLS termination, ready to receive HTTPRoute attachments from Team Alpha’s application developers, without the platform team needing to touch it again.

The Role-Based Model: Who Owns What

The Gateway API was designed with organisational reality in mind. In most enterprises, a platform or infrastructure team is responsible for the gateway infrastructure, while multiple development teams are responsible for routing their own services. The old Ingress model forced both groups to share a single resource, which led to RBAC headaches, annotation sprawl, and configuration conflicts.

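NGINX Gateway Fabric cleanly maps to the three distinct roles defined by the Gateway API:

- Infrastructure provider: owns the GatewayClass and the gateway implementation behind it.

- Cluster operator: owns Gateway resources, including listeners, ports, and TLS settings.

- Application developer: owns HTTPRoute, GRPCRoute, and other route resources that attach services to a Gateway.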

This delegation model is enforced through standard Kubernetes RBAC. A developer with access to create HTTPRoute resources in their namespace cannot create Gateway objects or modify the GatewayClass. The separation is not just organisational convention — it is enforced by the Kubernetes API server itself.
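As an illustration of how this enforcement might look in practice, the sketch below grants a developer group permission to manage routes in one namespace and nothing else. The Role name, namespace, and group name are hypothetical; only the API groups and resource names come from Kubernetes and the Gateway API.

```yaml
apiVersion: rbac.authorization.k8s.io/v1
kind: Role
metadata:
  name: route-editor              # hypothetical Role name
  namespace: team-alpha           # hypothetical namespace
rules:
# Developers may manage routes only. No rule covers "gateways",
# so Gateway objects stay under the cluster operator's control.
- apiGroups: ["gateway.networking.k8s.io"]
  resources: ["httproutes", "grpcroutes"]
  verbs: ["get", "list", "watch", "create", "update", "patch", "delete"]
---
apiVersion: rbac.authorization.k8s.io/v1
kind: RoleBinding
metadata:
  name: route-editor-binding      # hypothetical binding name
  namespace: team-alpha
subjects:
- kind: Group
  name: team-alpha-developers     # hypothetical group from your identity provider
  apiGroup: rbac.authorization.k8s.io
roleRef:
  kind: Role
  name: route-editor
  apiGroup: rbac.authorization.k8s.io
```

Because GatewayClass is cluster-scoped, a namespaced Role like this one cannot grant access to it at all; that would require a ClusterRole, which the platform team keeps for itself.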

Multi-Gateway Architecture: Isolation at Scale

A single NGF Control Plane can manage multiple Gateway objects — and therefore multiple independent NGINX Deployments — within the same cluster. This is one of the most powerful patterns NGINX Gateway Fabric enables.

Common use cases include:

- Team isolation: Each product team gets its own Gateway with its own external IP. A misconfiguration in one team’s routes cannot affect another team’s traffic.

- Environment separation: Production and staging gateways coexist in the same cluster with different TLS certificates, different rate limit policies, and independently scalable NGINX deployments.

- SLA tiers: A premium API gateway can run on dedicated nodes with more NGINX replicas, while internal tooling routes through a smaller, cost-optimised gateway.

- Protocol separation: One Gateway handles HTTPS traffic; another handles TCP/UDP for non-HTTP workloads.

Each Gateway scales its data plane independently. If Team Alpha’s services suddenly receive ten times the traffic, you can scale the NGINX Deployment backing their Gateway without affecting any other team. The control plane itself can also be horizontally scaled — with leader election ensuring only one pod writes status updates to Gateway API resources, while all replicas can push configuration to data planes.
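One way to express per-Gateway data plane sizing declaratively is through an NginxProxy resource referenced from the Gateway. The following is a sketch under assumptions: the `gateway.nginx.org/v1alpha2` API version and the `spec.kubernetes.deployment.replicas` field reflect the NGF 2.x API as best understood here, and the resource names are hypothetical. Check the CRDs installed in your cluster before relying on any field.

```yaml
apiVersion: gateway.nginx.org/v1alpha2   # assumed API version; verify against installed CRDs
kind: NginxProxy
metadata:
  name: team-alpha-proxy                 # hypothetical name
  namespace: team-alpha
spec:
  kubernetes:
    deployment:
      replicas: 4                        # data plane replicas for this Gateway only
---
apiVersion: gateway.networking.k8s.io/v1
kind: Gateway
metadata:
  name: production-gateway
  namespace: team-alpha
spec:
  gatewayClassName: nginx
  infrastructure:
    parametersRef:                       # links this Gateway to the NginxProxy above
      group: gateway.nginx.org
      kind: NginxProxy
      name: team-alpha-proxy
  listeners:
  - name: http
    port: 80
    protocol: HTTP
```

Other Gateways in the cluster keep their own replica counts, so raising this number affects only the NGINX pods backing this one Gateway.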

Unlocking More Power with NGINX Plus

Everything described so far applies equally to both NGINX Open Source and NGINX Plus. But for enterprises with strict uptime and performance requirements, NGINX Plus unlocks additional capabilities through the NGINX Plus API:

- Dynamic upstream updates: When Kubernetes scales your backend pods, changes are pushed to NGINX’s active upstream configuration via the API — no reload required. This eliminates the latency spike that can occur during reloads on high-throughput deployments.

- Advanced session persistence: Cookie-based sticky sessions for stateful application workloads.

- Real-time metrics and monitoring: Detailed per-upstream, per-server, and per-zone metrics exposed through the NGINX Plus dashboard and API, integrable with your observability stack.

- JWT authentication, OIDC, and CORS: Enterprise security features that can be enforced at the gateway layer, removing authentication logic from individual application services.

As an authorised NGINX partner, Ashnik can help you evaluate whether NGINX Open Source or NGINX Plus is the right fit for your workloads — and guide you through licensing, deployment, and ongoing support.

Observability: Built for Modern Platform Teams

NGINX Gateway Fabric integrates naturally with standard observability stacks. It exposes Prometheus-format metrics for monitoring — covering request rates, upstream response times, active connections, and TLS handshake data — and the control plane emits structured logs that feed into ELK, OpenSearch, or any log aggregator.

OpenTelemetry-based distributed tracing is also supported, enabling end-to-end trace propagation from the gateway to your backend services. Tracing is configured through NGF observability features such as ObservabilityPolicy, with broader data-plane defaults and overrides available through NginxProxy. For Grafana users, NGINX provides a sample dashboard JSON that teams can import and extend for their own monitoring needs.
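A tracing policy attached to a single route might look roughly like the sketch below. Treat it as an assumption-laden example: the API version and the `tracing.strategy` and `tracing.ratio` fields follow the ObservabilityPolicy API as understood for recent NGF releases, the route name is hypothetical, and the OpenTelemetry collector endpoint still has to be configured separately through NginxProxy.

```yaml
apiVersion: gateway.nginx.org/v1alpha2   # assumed API version; verify against installed CRDs
kind: ObservabilityPolicy
metadata:
  name: orders-tracing                   # hypothetical policy name
  namespace: team-alpha
spec:
  targetRefs:
  - group: gateway.networking.k8s.io
    kind: HTTPRoute
    name: orders-route                   # hypothetical route to trace
  tracing:
    strategy: ratio                      # sample a percentage of requests
    ratio: 10                            # assumed to mean 10 percent of requests
```

Attaching the policy to a route rather than to the whole Gateway lets an application team opt in to tracing without changing behaviour for other routes.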

Conclusion: Architecture as a Feature

NGINX Gateway Fabric is not simply a new way to write Ingress rules. The separation of control plane and data plane, the gRPC-based configuration delivery, the dynamic provisioning model, and the role-aware resource hierarchy are all deliberate architectural decisions that make it materially easier to operate Kubernetes networking at enterprise scale.

For platform teams managing multiple development teams on shared clusters, the multi-gateway and RBAC model is a significant operational simplification. For SREs responsible for uptime, the fault isolation between planes is a meaningful improvement in resilience. For security teams, the clear separation of concerns and policy enforcement at the gateway layer addresses requirements that annotation-based Ingress controllers could never cleanly satisfy.

Understanding this architecture is the starting point for deploying NGINX Gateway Fabric correctly — sizing your control plane appropriately, structuring your Gateway topology to match your team structure, and making informed decisions about NGINX Open Source versus NGINX Plus.