The enterprise AI narrative is dangerously misdirected.

Every conference debate circles around model size, inference cost, prompt engineering and agent frameworks.

That is not where enterprise AI breaks.

It breaks at the system boundary.

Inside most serious enterprises, AI already works. Code is written faster. Risk scenarios are evaluated in minutes. Support tickets are summarized instantly.

The pilot succeeds.

Then it stalls.

Not because the model is weak.

Because the architecture is.

And most organizations are trying to bolt intelligence onto platforms that were never designed for it.

The Lie We Tell Ourselves: “We’ll Scale After the Pilot”

This is the most expensive assumption in enterprise AI.

“We’ll prove the use case first.”

“We’ll harden it later.”

Later rarely comes.

Because the moment AI influences a real workflow — pricing, risk scoring, customer communication, operational automation — it inherits production expectations:

- Traceability

- Governance

- Reliability

- Latency guarantees

- Security boundaries

Scaling AI is not a later phase.

It is an architectural decision made on day one.

Where Enterprise AI Actually Breaks

AI pilots succeed at the edge.

Enterprise systems operate at the core.

The collision happens in four places:

- Data Freshness vs Model Confidence

AI outputs look confident even when data is stale.

If ingestion pipelines lag, if schemas drift, if lineage is unclear — the system does not fail loudly.

It degrades silently.

Most AI issues at scale are not intelligence failures.

They are pipeline failures.
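
One way to make stale data fail loudly is a freshness gate in front of the model. The sketch below is illustrative only: `FeatureBatch`, `require_fresh`, `StaleDataError` and the 15-minute budget are hypothetical names and values, not part of any specific platform.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone
from typing import Optional


class StaleDataError(RuntimeError):
    """Raised when input data is too old or its schema has drifted."""


@dataclass(frozen=True)
class FeatureBatch:
    # Hypothetical container: the features plus pipeline metadata about them.
    values: dict
    ingested_at: datetime   # when the pipeline last refreshed this data
    schema_version: str     # schema the pipeline wrote


def require_fresh(batch: FeatureBatch, max_age: timedelta, expected_schema: str,
                  now: Optional[datetime] = None) -> FeatureBatch:
    """Return the batch unchanged, or raise instead of letting the model guess."""
    now = now or datetime.now(timezone.utc)
    age = now - batch.ingested_at
    if age > max_age:
        raise StaleDataError(f"data is {age} old, budget is {max_age}")
    if batch.schema_version != expected_schema:
        raise StaleDataError(
            f"schema {batch.schema_version!r} does not match {expected_schema!r}"
        )
    return batch


# Usage: a batch ingested two hours ago is rejected under a 15-minute budget.
now = datetime(2024, 1, 1, 12, 0, tzinfo=timezone.utc)
stale = FeatureBatch({"credit_utilization": 0.42}, now - timedelta(hours=2), "v2")
try:
    require_fresh(stale, timedelta(minutes=15), "v2", now=now)
except StaleDataError as err:
    print(f"refusing to score: {err}")
```

The design choice is that the gate raises rather than logging and continuing. A confident answer on stale input is the silent degradation described above; an exception is something an on-call engineer can see.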

- Observability Blind Spots

Traditional monitoring tells you CPU usage.

It does not tell you:

- Why model outputs are shifting

- Whether certain customer segments receive different outcomes

- If latency is compounding across data + inference layers

- Whether retrieval quality is degrading over time

If AI behavior is not observable across logs, metrics, traces and data flow — it cannot be governed.

And if it cannot be governed, it cannot be trusted.
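
As a rough illustration of what that visibility could look like, the sketch below times each stage of a request separately and counts outcomes per customer segment. `DecisionTelemetry` is a hypothetical in-memory stand-in for a real metrics backend, and the stage and segment names are made up for the example.

```python
import time
from collections import defaultdict
from contextlib import contextmanager


class DecisionTelemetry:
    """In-memory stand-in for a metrics backend (hypothetical, for illustration)."""

    def __init__(self):
        self.stage_seconds = defaultdict(list)                  # stage -> latencies
        self.outcomes = defaultdict(lambda: defaultdict(int))   # segment -> outcome -> count

    @contextmanager
    def stage(self, name):
        # Time one layer (retrieval, inference, ...) so compounding latency is visible.
        start = time.perf_counter()
        try:
            yield
        finally:
            self.stage_seconds[name].append(time.perf_counter() - start)

    def record_outcome(self, segment, outcome):
        self.outcomes[segment][outcome] += 1

    def outcome_rate(self, segment, outcome):
        # Share of a segment's decisions that ended in the given outcome.
        counts = self.outcomes.get(segment, {})
        total = sum(counts.values())
        return counts.get(outcome, 0) / total if total else 0.0


telemetry = DecisionTelemetry()
with telemetry.stage("retrieval"):
    pass  # fetch context here
with telemetry.stage("inference"):
    pass  # call the model here

telemetry.record_outcome("smb", "approved")
telemetry.record_outcome("smb", "declined")
telemetry.record_outcome("enterprise", "approved")

print(telemetry.outcome_rate("smb", "approved"))
print(sorted(telemetry.stage_seconds))
```

Comparing `outcome_rate` across segments over time is one simple way to notice that certain customers are starting to receive different outcomes, which CPU graphs will never show.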

- Ownership Vacuum

During a pilot, ownership is clear: the innovation team.

In production, who owns it?

Platform engineering?

Data engineering?

Security?

Application teams?

When AI spans data pipelines, storage tiers, APIs and application logic, ownership fragmentation becomes a structural risk.

- Infrastructure Designed for Static Workloads

Most enterprise systems were designed around deterministic applications.

AI systems are probabilistic, dynamic and data-hungry.

They demand:

- High-throughput ingestion

- Real-time indexing

- Scalable storage tiers

- Low-latency retrieval

- End-to-end visibility

If the underlying platform cannot sustain that flow, AI becomes brittle.

The Architecture Reality

Enterprise AI at scale is not a model problem.

- It is a data flow problem.

- It is an observability problem.

- It is a control plane problem.

Below is what actually separates scalable AI from stalled AI.

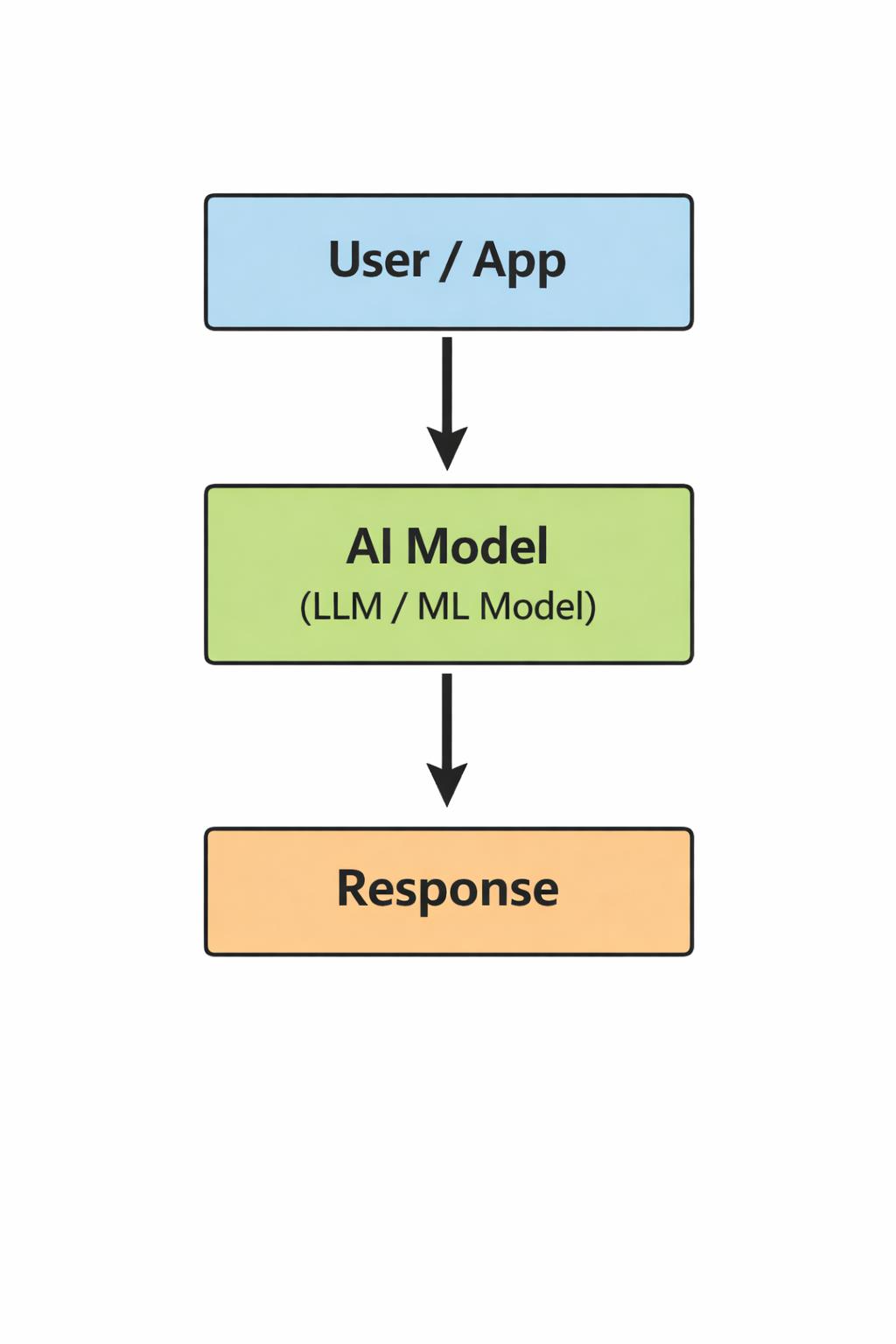

Diagram 1: The Pilot View (How Most Enterprises Think About AI)

- This is how pilots are conceptualized.

- The model is central. Everything else is peripheral.

- It works in isolation.

- It collapses at scale.

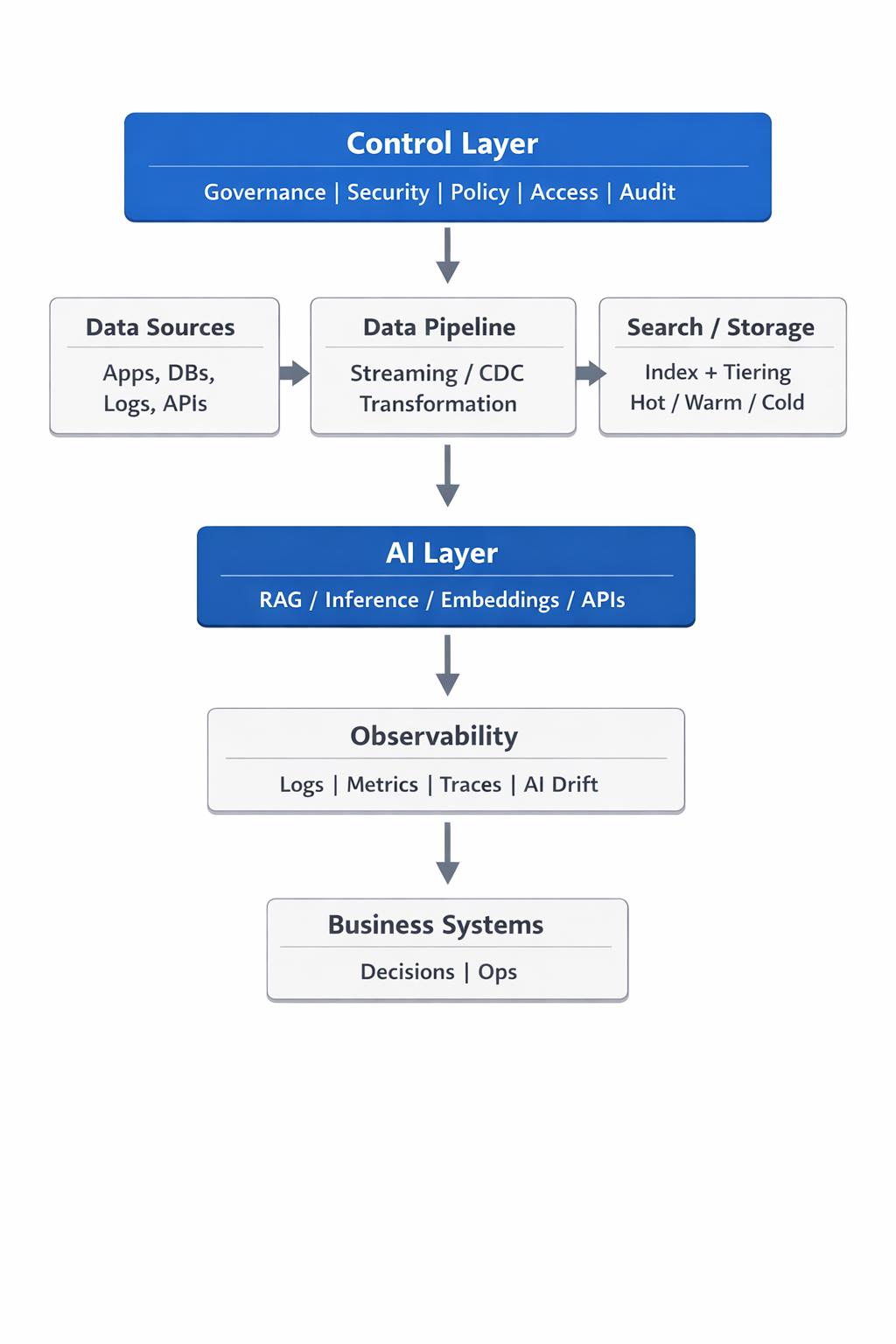

Diagram 2: The Production Reality (What AI Actually Requires)

In production, the model is only one layer in a much larger system.

And that system determines whether AI survives contact with reality.

The Uncomfortable Truth

Enterprises that struggle with AI adoption often blame:

- Vendor limitations

- Model immaturity

- Regulatory friction

In reality, the constraint is architectural readiness.

Organizations with:

- Mature streaming data pipelines

- Unified observability across infrastructure and applications

- Searchable, scalable storage tiers

- Open, adaptable platform foundations

move from pilot to production far more smoothly.

Not because their models are better.

Because their execution layer is stronger.

Why Open Foundations Matter More in AI

AI systems evolve rapidly.

Models change. Retrieval patterns change. Data sources multiply.

Closed, rigid platforms amplify lock-in and reduce visibility precisely when adaptability is required.

Open foundations — in data pipelines, search platforms, observability frameworks — provide structural flexibility when intelligence becomes part of core systems.

At scale, adaptability is not ideology.

It is risk management.

A More Contrarian View of Enterprise AI Maturity

AI maturity is not measured by:

- Number of copilots deployed

- Number of models in production

- Percentage of teams using AI tools

It is measured by:

- Data pipeline reliability under AI-driven load

- End-to-end decision traceability

- Unified observability across model + infrastructure

- Controlled rollout and rollback capability

- Governance embedded in architecture
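
Two of these measures, decision traceability and controlled rollout with rollback, can be sketched in a few lines. This is a minimal illustration under stated assumptions: `RolloutConfig`, `pick_version`, `DecisionRecord` and the version and snapshot labels are hypothetical, and a real system would write the record to durable storage rather than return a string.

```python
import hashlib
import json
import uuid
from dataclasses import asdict, dataclass


@dataclass(frozen=True)
class RolloutConfig:
    stable_version: str
    candidate_version: str
    candidate_percent: int  # 0 = fully rolled back, 100 = fully rolled out


def pick_version(entity_id: str, cfg: RolloutConfig) -> str:
    """Deterministically place an entity in a bucket 0..99 and route it."""
    digest = hashlib.sha256(entity_id.encode("utf-8")).digest()
    bucket = int.from_bytes(digest[:4], "big") % 100
    if bucket < cfg.candidate_percent:
        return cfg.candidate_version
    return cfg.stable_version


@dataclass(frozen=True)
class DecisionRecord:
    trace_id: str        # joins this decision to logs and traces
    entity_id: str
    model_version: str   # which model produced the output
    data_snapshot: str   # which data version it saw
    output: str


def record_decision(entity_id: str, cfg: RolloutConfig,
                    data_snapshot: str, output: str) -> str:
    record = DecisionRecord(
        trace_id=uuid.uuid4().hex,
        entity_id=entity_id,
        model_version=pick_version(entity_id, cfg),
        data_snapshot=data_snapshot,
        output=output,
    )
    return json.dumps(asdict(record), sort_keys=True)


cfg = RolloutConfig("risk-v1", "risk-v2", candidate_percent=10)
print(record_decision("customer-123", cfg, "snapshot-2024-01-01", "approve"))

# Rollback is a config change, not a redeploy: set the candidate share to zero.
rolled_back = RolloutConfig("risk-v1", "risk-v2", candidate_percent=0)
print(pick_version("customer-123", rolled_back))
```

Hashing the entity ID keeps routing stable across requests, so the same customer always sees the same model version for a given config, and every recorded decision names the model and data it came from.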

Most organizations are experimenting with AI.

Few are engineering for it.

What Will Separate Winners From the Rest

The next phase of enterprise AI will not reward experimentation.

It will reward platform discipline.

The enterprises that treat AI as:

- An extension of their data platform

- A consumer of high-quality, governed streams

- A workload that demands observability parity with critical systems

will move ahead quietly and sustainably.

The rest will continue to demo.

And call it transformation.

The Architectural Bottom Line

AI is not a feature you add.

It is a workload you must architect for.

The model is visible.

The execution layer is invisible.

And in enterprise systems, what is invisible usually determines what survives.