Data doesn’t wait. In financial services, that’s not a philosophical statement. It’s an operational reality.

Three months before this pipeline reached its current scale, the organization was ingesting around 200 GB of data per day. By the time I was done, that number had climbed to 1.2 TB — a 20 to 25 percent jump every single month, driven almost entirely by new streaming sources coming online.

This was a large BFSI enterprise. Data in transit here isn’t just a technical concern; it’s a compliance obligation. Every byte had to be encrypted, traceable, and reliable from the moment it left the source system. That meant the architecture started not with NiFi, but with NGINX sitting at the entry point, handling SSL termination via a wildcard certificate, so all incoming data was secured before it touched the processing layer. With encryption handled at the edge, NiFi could stay focused on what it does best.

What sat behind that NGINX layer was the real challenge.

The Problem

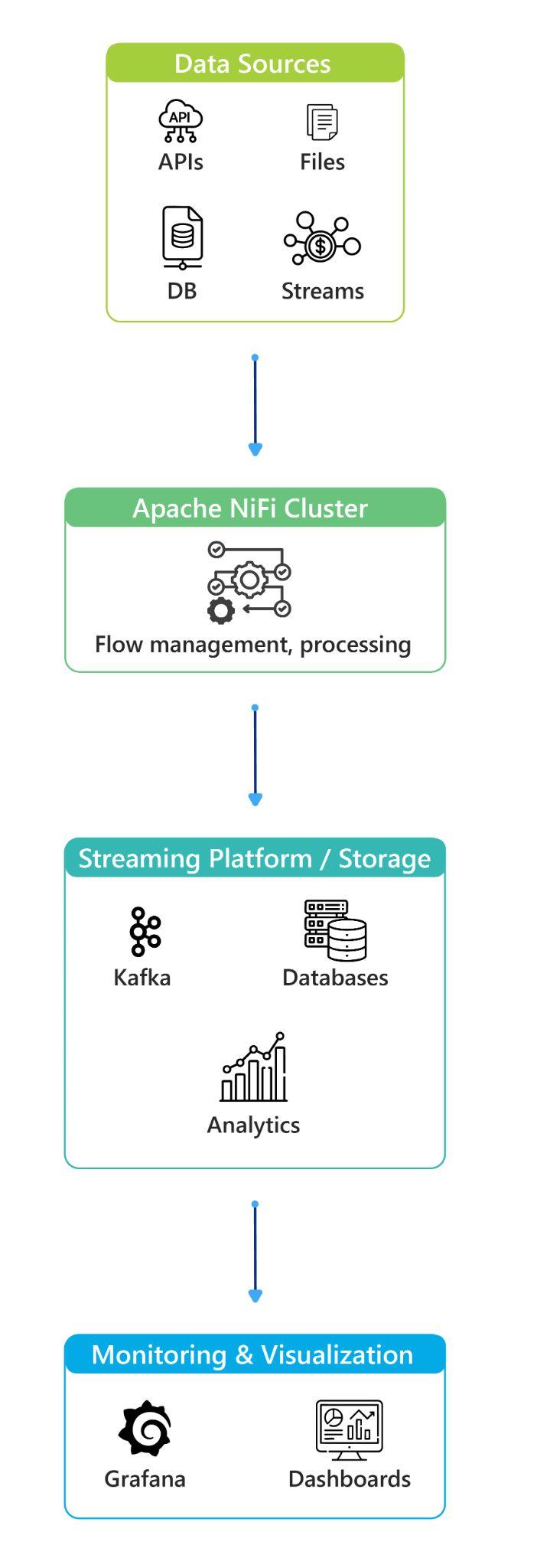

The organization was ingesting from four fundamentally different source types simultaneously:

- REST APIs generating event data every 1 to 15 minutes, hourly, or on demand

- SFTP file servers delivering data hourly, daily, or triggered by file arrival

- Relational databases running near-real-time, hourly, and daily batch extractions

- Streaming platforms sending continuous data at second or millisecond frequency

Each behaves differently. APIs have rate limits and retry logic. File sources are bursty — nothing for an hour, then everything at once. Databases need careful extraction windows. Streaming is relentless.
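To make the API case concrete, here is a minimal sketch of retry with exponential backoff, the usual way to respect a rate limit. It is not the retry configuration used in this pipeline (in NiFi this is normally handled by processor and connection settings); `RateLimited` and `call_with_backoff` are hypothetical names chosen for illustration.

```python
import time
from typing import Callable, TypeVar

T = TypeVar("T")


class RateLimited(Exception):
    """Hypothetical error a client raises when the API answers HTTP 429."""


def call_with_backoff(
    fetch: Callable[[], T],
    max_attempts: int = 5,
    base_delay: float = 1.0,
    max_delay: float = 60.0,
    sleep: Callable[[float], None] = time.sleep,
) -> T:
    """Call fetch(), retrying on RateLimited with exponentially growing waits."""
    for attempt in range(max_attempts):
        try:
            return fetch()
        except RateLimited:
            if attempt == max_attempts - 1:
                raise  # out of attempts: surface the failure to the caller
            # Wait 1s, 2s, 4s, ... capped at max_delay.
            sleep(min(base_delay * 2 ** attempt, max_delay))
    raise ValueError("max_attempts must be at least 1")
```

The `sleep` parameter is injectable only so the waits can be observed without actually pausing; in real use the default `time.sleep` applies.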

Handling one of these well is straightforward. Handling all four together, reliably, at a scale growing 20 percent every month — that’s where it gets interesting. For a BFSI organization, this data powers analytics platforms, monitoring systems, and operational applications where delays have real consequences.

This is the environment where Apache NiFi earned its place — not just as a data movement tool, but as a platform for controlling how data moves. Routing, backpressure, retry handling, scheduling, and visibility, all in one place. At Ashnik, choosing the right tool for the right scale is something we think about carefully. NiFi was the right fit here.

The Architecture

The NiFi cluster ran on AWS, deployed across three nodes coordinated by an external Apache ZooKeeper service. Infrastructure per node:

- OS: Ubuntu 24.04 Pro

- Java: OpenJDK 21.0.9, default JVM tuning

- CPU: 8 cores

- RAM: 62 GB

- Disk: 64 GB OS disk + 128 GB dedicated data disk

That 128 GB data disk would become important later.

ZooKeeper handled cluster coordination — leader election and state management — cleanly separated from NiFi. The full stack:

| Component | Version |
|---|---|
| Apache NiFi | 1.28 |
| Apache Airflow | 2.10.4 |
| NGINX | 1.24.0 |
| Grafana | 11.4.0 |

Rather than one large monolithic flow, we designed separate pipelines for each source type — APIs, files, databases, and streaming — each running independently. When streaming volumes spiked, we could tune that pipeline without touching database extraction settings. When file ingestion had issues, it didn’t cascade elsewhere. Within each pipeline, data moved through three consistent stages: ingestion via source-specific processors, routing based on data type and priority, and transformation before pushing to downstream systems.

External orchestration via Airflow triggered jobs at defined intervals:

| Source Type | Schedule |
|---|---|
| APIs | Every 5 to 15 minutes |
| Files | Triggered on file arrival |
| Databases | Hourly extraction |
| Streaming | Continuous |

Backpressure: The Part That Actually Keeps Pipelines Alive

In the early stages, I hit a wall. The platform could not process large volumes fast enough. During peak ingestion windows — particularly from streaming sources — queue sizes were growing 2 to 3x within short intervals. The pipeline wasn’t crashing dramatically. It was degrading quietly, and that’s the harder problem to catch.

The initial thresholds weren’t built for what this pipeline was actually handling. I had to tune.

For high-volume pipelines, FlowFile thresholds were increased to between 75,000 and 150,000, and queue size limits were adjusted to between 5 and 15 GB depending on pipeline load characteristics. When limits are reached, NiFi automatically slows upstream components — data waits rather than floods.
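The mechanism can be pictured with a toy model. A NiFi connection carries two backpressure settings, an object (FlowFile count) threshold and a data size threshold, and reaching either one stops the upstream component from being scheduled. The sketch below is a simplified simulation, not NiFi's implementation: the `Connection` class and its methods are hypothetical, and real NiFi treats these thresholds as soft limits.

```python
from dataclasses import dataclass

GB = 1024 ** 3


@dataclass
class Connection:
    """Toy model of a queue between two processors (not NiFi's actual code)."""
    object_threshold: int      # max queued FlowFiles before backpressure
    size_threshold_bytes: int  # max queued bytes before backpressure
    queued_objects: int = 0
    queued_bytes: int = 0

    def backpressure_applied(self) -> bool:
        # Reaching either limit is enough to pause the upstream component.
        return (
            self.queued_objects >= self.object_threshold
            or self.queued_bytes >= self.size_threshold_bytes
        )

    def offer(self, size_bytes: int) -> bool:
        """Enqueue one FlowFile unless backpressure is active; report success."""
        if self.backpressure_applied():
            return False  # upstream is paused; the data waits at the source
        self.queued_objects += 1
        self.queued_bytes += size_bytes
        return True

    def poll(self, size_bytes: int) -> None:
        """Record that downstream consumed one FlowFile of the given size."""
        self.queued_objects -= 1
        self.queued_bytes -= size_bytes


# Upper end of the tuned range described above.
streaming = Connection(object_threshold=150_000, size_threshold_bytes=15 * GB)
```

With 1 MiB FlowFiles, this connection hits the 15 GB size limit after 15,360 items, long before the 150,000 object limit, which is why both thresholds have to be tuned together.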

But tuning isn’t just about raising numbers. Every increase in queue size tolerance adds pressure on the disk, because queued data has to sit somewhere until it is processed. With a 128 GB data disk per node, shifting a bottleneck from memory to storage just moves the problem. Every tuning decision we made weighed processing capacity against disk utilization.
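A quick budget check makes that trade-off visible. The sketch below sums the size thresholds of the connections that could fill at the same time and compares the total with the disk, keeping some headroom free. The connection counts and the 20 percent headroom are assumptions for illustration, not the values used in this deployment, and the real footprint also depends on how NiFi's repositories are laid out.

```python
GB = 1024 ** 3


def worst_case_queue_bytes(size_thresholds_gb: list[float]) -> int:
    """Sum of the size thresholds of all connections that could fill at once."""
    return int(sum(size_thresholds_gb) * GB)


def fits_on_disk(size_thresholds_gb: list[float], disk_gb: float,
                 headroom: float = 0.2) -> bool:
    """True if worst-case queued data still leaves `headroom` of the disk free."""
    usable = disk_gb * (1 - headroom) * GB
    return worst_case_queue_bytes(size_thresholds_gb) <= usable


# Hypothetical layouts on a 128 GB data disk:
print(fits_on_disk([15, 15, 10, 5], disk_gb=128))  # 45 GB worst case
print(fits_on_disk([15] * 10, disk_gb=128))        # 150 GB worst case
```

The first layout fits comfortably; the second would exceed the disk if every queue filled at once, even though each individual threshold looks reasonable.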

In a BFSI context, this kind of disciplined flow control is foundational. But backpressure does something else that often goes unnoticed: it tells you something. When queues are regularly brushing their limits even after tuning, the system is signaling it’s working harder than it should. That signal matters.

Watching these patterns closely is what led me to a larger architectural conversation.

What Backpressure Told Me: The Case for Kafka

As I monitored the pipeline, the pattern became impossible to ignore. Streaming sources were consistently driving queue depths toward their thresholds — not occasionally, but regularly. NiFi was holding by doing exactly what backpressure is designed to do: slow things down to stay in control. That’s the system working as designed. But it also signals a ceiling — and with volumes growing 20 percent month over month, that ceiling was approaching fast.
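How fast is "fast"? A rough compound-growth projection, assuming the 20 percent monthly rate simply continues from 1.2 TB per day (an assumption, not a forecast made for this project):

```python
import math


def projected_tb_per_day(current_tb: float, monthly_growth: float, months: int) -> float:
    """Compound the current daily volume forward at a fixed monthly growth rate."""
    return current_tb * (1 + monthly_growth) ** months


def months_to_multiply(factor: float, monthly_growth: float) -> float:
    """Months needed for volume to grow by `factor` at a fixed monthly rate."""
    return math.log(factor) / math.log(1 + monthly_growth)


for m in (3, 6, 12):
    print(f"month {m}: {projected_tb_per_day(1.2, 0.20, m):.2f} TB/day")
print(f"doubling time: {months_to_multiply(2, 0.20):.1f} months")
```

At that rate the daily volume doubles in under four months, so thresholds that are comfortable today are likely to be tight within a quarter or two.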

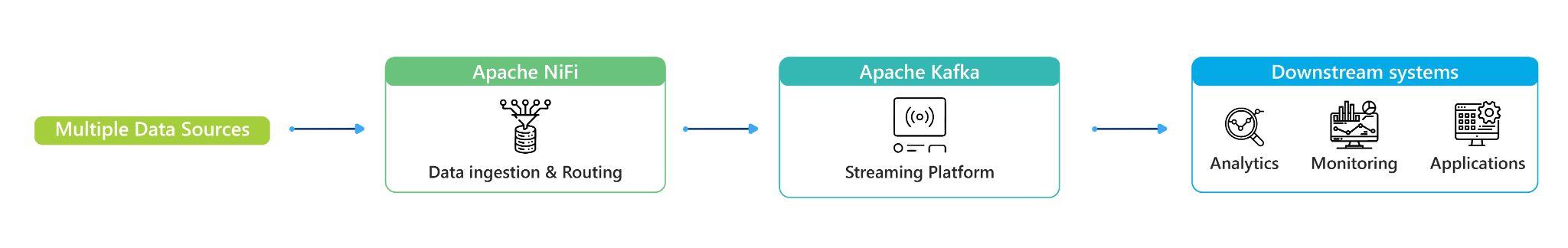

Our recommendation was to introduce Apache Kafka as a dedicated buffer layer between source systems and NiFi. Not to replace NiFi — but to give the architecture room to breathe.

The distinction is important. NiFi is strong at the edges — diverse sources, different protocols, routing, and transformation. Kafka is strong at absorption — high-throughput spikes, decoupling producers from consumers, ensuring bursts don’t propagate pressure downstream. Together, Kafka absorbs the spikes, NiFi processes with control, and backpressure thresholds become a safety net rather than a ceiling.
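The absorption idea can be sketched with a small simulation: a buffer receives bursty input and is drained at a steady rate, which is the role Kafka would play in front of NiFi. This is a toy model with made-up traffic numbers; it uses no Kafka API and says nothing about actual topic sizing.

```python
def peak_backlog(arrivals: list[int], capacity_per_tick: int) -> tuple[int, int]:
    """Simulate a buffer fed by bursty arrivals and drained at a steady rate.

    Returns (peak backlog, ticks needed to fully drain after the last arrival).
    """
    backlog = 0
    peak = 0
    for arrived in arrivals:
        backlog += arrived
        peak = max(peak, backlog)
        backlog = max(0, backlog - capacity_per_tick)
    # After the input stops, count how many more ticks the consumer needs.
    drain_ticks = -(-backlog // capacity_per_tick)  # ceiling division
    return peak, drain_ticks


# Hypothetical traffic: steady 80 units per tick with one burst of 500.
traffic = [80, 80, 500, 80, 80, 80]
print(peak_backlog(traffic, capacity_per_tick=100))
```

The consumer never has to run faster than 100 units per tick; the buffer holds the burst (a peak of 500 units here) and the backlog drains over the following ticks instead of pushing pressure back onto the sources.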

This recommendation came from our team operating the system under load, not from architecture diagrams. The pipeline was working. We saw how it could work better, and we said so.

What Operating at Scale Actually Looks Like

Clustered NiFi is powerful and operationally demanding. Here’s what I actually encountered:

Node sync issues after restarts. Nodes went out of sync and required manual intervention to stabilize. In a three-node setup, one inconsistent node affects the whole cluster.

Distributed execution inconsistencies. The same processor configuration behaved differently depending on which node executed it. Not a NiFi bug — a configuration discipline problem that only surfaces at scale.

Nodes disconnecting automatically. Nodes dropped without warning and stopped appearing in the UI entirely. Diagnosing a node you can’t see requires knowing where to look beyond the interface.

Airflow reporting success while the backend was failing. Jobs showed as successful in Airflow while errors accumulated quietly in the backend. Without active monitoring of both layers, this kind of silent failure erodes pipeline trust fast.

Disk approaching capacity during high ingestion. Queue buildup during peak periods drove the 128 GB data disk toward its limits. This wasn’t theoretical — it happened. Alerting needs to be configured well before the threshold, not at it.
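For the disk case, alerting "well before the threshold" can be as simple as a two-level check. The sketch below uses Python's standard `shutil.disk_usage`; the 70 and 85 percent levels are illustrative assumptions, not the values configured on this cluster, and in practice the check would feed whatever alerting system is in place.

```python
import shutil


def disk_alert_level(used_fraction: float, warn_at: float = 0.70,
                     critical_at: float = 0.85) -> str:
    """Map disk utilization (0.0 to 1.0) to an alert level."""
    if used_fraction >= critical_at:
        return "critical"
    if used_fraction >= warn_at:
        return "warning"
    return "ok"


def check_path(path: str) -> str:
    """Classify the current utilization of the filesystem holding `path`."""
    usage = shutil.disk_usage(path)  # named tuple: total, used, free (bytes)
    return disk_alert_level(usage.used / usage.total)


# "." stands in for the mount point of the dedicated data disk.
print(check_path("."))
```

The gap between the warning and critical levels is the time available to react, so it should be sized against how quickly queues fill during peak ingestion.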

Grafana 11.4.0 dashboards gave me real-time visibility across cluster health, queue depths, and system performance throughout.
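Dashboards help, but the silent-failure case described above can also be closed in the orchestration layer: after triggering a job, check the backend and fail the task explicitly if it looks unhealthy. The sketch below shows only the decision logic. `BackendSnapshot`, its fields, and `verify_backend` are hypothetical; how the numbers are collected (for example from NiFi's status and bulletin data) is left out, and this is not the check that was deployed here.

```python
from dataclasses import dataclass


@dataclass
class BackendSnapshot:
    """Hypothetical summary of backend state collected after a job is triggered."""
    error_bulletins: int       # error-level messages raised during the run
    flowfiles_in_failure: int  # items routed to a failure queue
    records_expected: int
    records_delivered: int


class BackendCheckFailed(Exception):
    """Raised so the orchestrator marks the task as failed instead of successful."""


def verify_backend(snapshot: BackendSnapshot, min_delivery_ratio: float = 1.0) -> None:
    """Raise BackendCheckFailed if the backend shows signs of a failed run."""
    problems = []
    if snapshot.error_bulletins > 0:
        problems.append(f"{snapshot.error_bulletins} error bulletins")
    if snapshot.flowfiles_in_failure > 0:
        problems.append(f"{snapshot.flowfiles_in_failure} items in failure queues")
    if snapshot.records_expected > 0:
        ratio = snapshot.records_delivered / snapshot.records_expected
        if ratio < min_delivery_ratio:
            problems.append(f"only {ratio:.0%} of expected records delivered")
    if problems:
        raise BackendCheckFailed("; ".join(problems))
```

Called as the last step of an Airflow task, an uncaught exception like this turns a misleading green run into a visible failure.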

What Changed, and What It Reinforced

With backpressure properly tuned, monitoring tightened, and the Kafka recommendation in place, the pipeline’s behavior changed noticeably. Ingestion delays during peak load were reduced. The system became predictable — I knew what to expect during high-volume windows instead of reacting to surprises. Queue behavior stabilized, disk pressure became manageable, and the platform was positioned to scale with the business rather than behind it.

Operating a pipeline that grew from 200 GB to 1.2 TB per day in three months, across four concurrent source types, in a BFSI environment where reliability is non-negotiable, sharpened my instincts quickly. NiFi rewards careful architecture and disciplined monitoring. When those are in place, it handles complexity that would break simpler setups. When they’re not, the system tells you through queue depths, disk pressure, and nodes that quietly disappear from the UI.

The job isn’t just building the pipeline. It’s reading what the pipeline tells you, and knowing what to do about it. That’s what I took away from this.